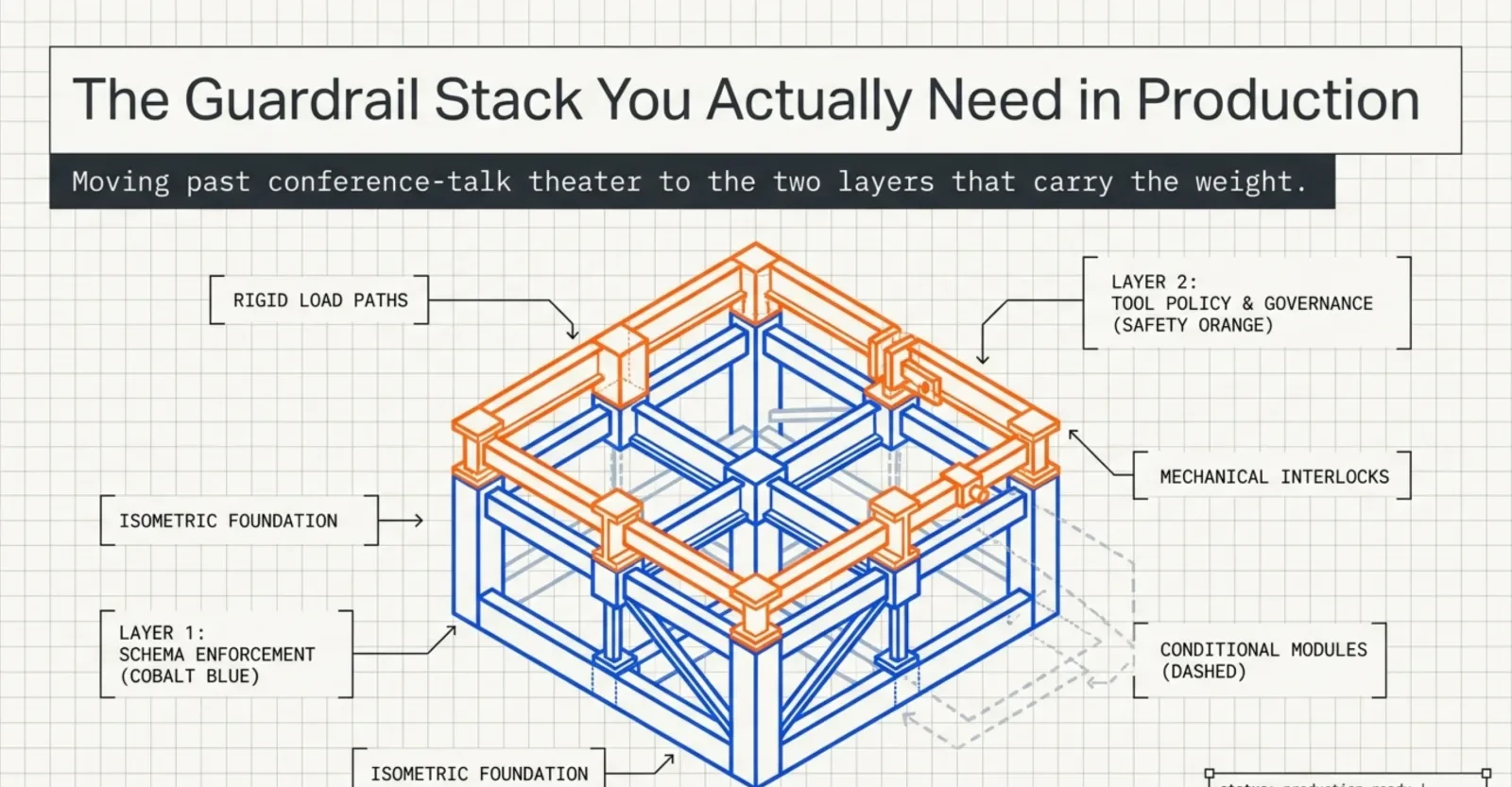

The Guardrail Stack You Actually Need in LLM Production

Five layers of LLM guardrails get talked about. Two of them carry the weight in most shipped features: output schema validation and tool/action policy. The other three are mostly theater until specific triggers fire. A practical breakdown drawn from running LLM features in production.

Every conference talk on production LLMs lists the same five guardrail layers: input filtering for prompt injection and jailbreaks, output filtering for PII and schema, a hallucination boundary, a tool and action policy, and human-in-the-loop on the dangerous bits. The slide is always the same. The implication is always the same. Build all five or you will be sorry.

In practice, two of those five carry the weight. The other three are theater for most shipped features — useful eventually, but not the thing standing between your feature and a 2 a.m. incident.

This post is the honest version of the guardrail diagram: which two layers are non-negotiable on day one, what they actually look like in code, and which triggers force you to add the others.

The five layers, briefly

Before throwing three of them under the bus, here's the full list so we know what we're talking about:

- Input filter — detect prompt injection, jailbreak attempts, off-topic input before the prompt is built.

- Output schema validation — guarantee the model's response parses into the structure your code expects.

- Hallucination boundary — verify factual claims against a source of truth, force citations, refuse on low confidence.

- Tool and action policy — control which tools the model can call, with which credentials, and which calls require a human.

- Human-in-the-loop — a person approves or edits the model's output before anything irreversible happens.

The two doing real work are output schema validation and tool and action policy. Everything else is conditional.

Why these two, and not the others

A guardrail earns its place by answering one question: what breaks if I don't have it? For most LLM features the honest answer collapses to two failure modes:

- The model returns text my code can't use. No schema validation, no feature. The pipeline crashes on the first malformed JSON and your users see a generic error.

- The model performs an action I didn't intend. Without a tool policy, the agent sends the wrong email, writes to the wrong table, or posts to the wrong channel. Cleanup is manual and expensive.

The other three layers protect against failure modes that only matter once a specific trigger fires: untrusted multi-tenant input, regulated data, or a use case where a single hallucinated fact is the product (medical, legal, financial advice). Most internal-facing features and most B2C features without those triggers don't need them on day one.

That's the framing. Now the deep dive.

Layer 1 — Output schema validation

This is the guardrail that turns "interesting demo" into "production feature." Without it, your LLM call returns a string and your code hopes for the best. With it, every successful call yields a typed object you can pass to the rest of the system, and every failed call fails loudly in one place.

The pattern

A complete output guardrail has four parts:

- A typed schema describing the exact structure you expect.

- A request that nudges the model to comply: JSON mode, tool-call schema, or response_format.

- A parser that validates the response against the schema.

- A repair-and-retry loop for the cases where the parser rejects the response.

In Python, that ends up looking like this:

```python
from typing import Literal

from json_repair import repair_json
from pydantic import BaseModel, Field, ValidationError


class JobFitResult(BaseModel):
    score: int = Field(ge=0, le=100)  # 0-100
    matches: list[str]
    gaps: list[str]
    recommendation: Literal["apply", "skip", "tailor_first"]


def parse_fit_result(raw: str) -> JobFitResult:
    try:
        # Pydantic v2 raises ValidationError for malformed JSON and schema violations alike.
        return JobFitResult.model_validate_json(raw)
    except ValidationError:
        # Models love trailing commas, single quotes, half-quoted keys.
        repaired = repair_json(raw)
        # Repair fixes syntax only; a schema violation still raises ValidationError here.
        return JobFitResult.model_validate_json(repaired)
```

The json_repair fallback is the unglamorous part of the pattern. Models do return malformed JSON — trailing commas, single quotes, a stray \n inside a string. A library that fixes the ninety-percent case before you give up is worth more than a smarter prompt.

The retry loop

Repair handles syntax. It does not handle semantics. If the model returns a score of "high" instead of 85, no amount of repair will save you. For that you need a retry that hands the validation error back to the model:

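```python
class InsufficientJobDataError(Exception):
    """Raised when the model cannot produce a valid JobFitResult."""


def fit_with_retry(prompt: str, max_retries: int = 2) -> JobFitResult:
    # llm_call is a placeholder for your model client: it takes the message
    # list and returns the raw response text.
    messages = [{"role": "user", "content": prompt}]
    for attempt in range(max_retries + 1):
        response = llm_call(messages)
        try:
            return parse_fit_result(response)
        except ValidationError as e:
            if attempt == max_retries:
                raise InsufficientJobDataError(str(e)) from e
            # Hand the failed output and the validation error back to the model.
            messages.append({"role": "assistant", "content": response})
            messages.append({
                "role": "user",
                "content": f"Your previous response failed validation: {e}. Return only the corrected JSON.",
            })
```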

Two retries is the sweet spot in practice. Three is paying for variance. Beyond that the prompt is the problem, not the model.

The error type matters

InsufficientJobDataError is the second piece most teams forget. When the LLM genuinely can't produce a valid result — the input is too thin, the page didn't load, the description is gibberish — you want a typed error you can catch upstream, not a ValidationError bubbling up from deep inside the parser. The whole point of schema validation is that downstream code stops needing to defensively check the LLM's output. That promise only holds if failures surface as a small, named set of exceptions.
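A minimal sketch of what that small, named set can look like from the caller's side. It gives InsufficientJobDataError a hypothetical shared base class, LLMOutputError, so future failure types join the same family. FitResponse, handle_fit_request, and the stub fake_fit (standing in for fit_with_retry so the example runs on its own) are also hypothetical; the point is that the handler branches on exception types, never on the shape of raw model output.

```python
from dataclasses import dataclass


class LLMOutputError(Exception):
    """Hypothetical base class for every way an LLM call can fail to yield usable output."""


class InsufficientJobDataError(LLMOutputError):
    """The model could not produce a valid result from the given input."""


@dataclass
class FitResponse:
    ok: bool
    message: str


def fake_fit(description: str) -> int:
    # Stand-in for fit_with_retry so the sketch runs without a model:
    # very thin input is treated as unscorable.
    if len(description.split()) < 5:
        raise InsufficientJobDataError("job description too short to score")
    return 80


def handle_fit_request(description: str) -> FitResponse:
    # The handler branches on a small, named set of exceptions and never
    # inspects raw model output itself.
    try:
        score = fake_fit(description)
    except InsufficientJobDataError:
        return FitResponse(False, "We couldn't read enough of this job posting to score it.")
    except LLMOutputError:
        return FitResponse(False, "Scoring is temporarily unavailable.")
    return FitResponse(True, f"Fit score: {score}")
```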

Why this is the load-bearing layer

Every other LLM concern is conditional. This one is not. If the model returns text and you don't validate it, you have written code that will eventually crash on a user-visible path, and the crash will be intermittent and hard to reproduce. Schema validation is the layer that makes the rest of the system possible to reason about.

Layer 2 — Tool and action policy

The moment you give an LLM a tool — send email, write to the database, post to a channel, file a ticket, charge a card — the failure mode escalates from "feature broken" to "harm done." This is the layer with asymmetric blast radius. A bug in schema validation gives the user a 500. A bug in tool policy sends the wrong email to the wrong person and you can't take it back.

Four sub-layers

A real tool policy has four parts, in order of how much harm they prevent:

- Tool allowlist per agent. An agent that only needs read_calendar and draft_email should not have send_email, delete_event, or anything else in its registered tools. The list is hard-coded, not dynamic.

- Scoped credentials. The token the LLM-driven service uses must have the minimum scope that lets the allowed tools work and nothing more. A draft-only flow uses a draft-only token. There is no "we'll just be careful" version.

- Human-in-the-loop on side-effectful tools. Anything that changes external state — sends a message, writes to a shared resource, costs money — should produce a draft for human approval, not execute directly.

- Idempotency keys on retry-able actions. If the agent retries because of a timeout, the second call should be a no-op, not a duplicate.
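The allowlist is the easiest sub-layer to weaken by accident, for example by registering every tool the service knows about with every agent. A minimal sketch, with hypothetical agent and tool names: the allowlist is a read-only mapping fixed at import time, and the dispatcher checks it before any tool code runs. It does not replace scoped credentials; if this check has a bug, the token is what still limits the damage.

```python
from types import MappingProxyType

# Hypothetical agents and tools. The mapping is hard-coded and read-only,
# so nothing at runtime (including model output) can widen an agent's toolset.
AGENT_TOOLS = MappingProxyType({
    "calendar_assistant": frozenset({"read_calendar", "draft_email"}),
    "ticket_triage": frozenset({"read_ticket", "draft_reply"}),
})


class ToolNotAllowedError(Exception):
    pass


def check_tool_call(agent: str, tool: str) -> None:
    """Raise unless `tool` is on `agent`'s allowlist; unknown agents get nothing."""
    allowed = AGENT_TOOLS.get(agent, frozenset())
    if tool not in allowed:
        raise ToolNotAllowedError(f"{agent!r} may not call {tool!r}")
```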

The drafts table pattern

The cleanest way to put this together is the drafts table. The LLM doesn't send, it drafts. A separate, non-LLM code path executes the draft after a human approves.

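```python
import uuid
from datetime import datetime

from sqlalchemy import Column, DateTime, String, Text
from sqlalchemy.dialects.postgresql import UUID
from sqlalchemy.orm import declarative_base

Base = declarative_base()  # or reuse your project's existing declarative Base


class DraftEmail(Base):
    __tablename__ = "draft_emails"

    id = Column(UUID(as_uuid=True), primary_key=True, default=uuid.uuid4)
    to_address = Column(String, nullable=False)
    subject = Column(String, nullable=False)
    body = Column(Text, nullable=False)
    status = Column(String, default="pending")  # pending, approved, sent, rejected
    approved_by = Column(String, nullable=True)
    approved_at = Column(DateTime, nullable=True)
    sent_at = Column(DateTime, nullable=True)
    idempotency_key = Column(String, unique=True, nullable=False)
    created_at = Column(DateTime, default=datetime.utcnow)
```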

Two things are worth noticing in this shape:

- The LLM only writes rows. It never has the credentials to actually send. The send path is a separate, audited function that requires status='approved'.

- idempotency_key is unique. As long as the key is derived from the intent rather than generated fresh on each attempt, an agent that retries draft creation because the network blipped gets a clean failure on the second insert instead of producing two emails for one intent.
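Both properties can be sketched end to end with Python's built-in sqlite3 standing in for the Postgres/SQLAlchemy setup above. The function names, the trimmed-down schema, and the key recipe (a hash of a hypothetical agent run_id plus recipient and subject) are assumptions for illustration; the actual email provider call is left as a comment.

```python
import hashlib
import sqlite3
import uuid
from typing import Optional

SCHEMA = """
CREATE TABLE draft_emails (
    id TEXT PRIMARY KEY,
    to_address TEXT NOT NULL,
    subject TEXT NOT NULL,
    body TEXT NOT NULL,
    status TEXT NOT NULL DEFAULT 'pending',
    approved_by TEXT,
    idempotency_key TEXT NOT NULL UNIQUE
)
"""


def intent_key(run_id: str, to_address: str, subject: str) -> str:
    # Deterministic: the same intent from the same agent run always yields the
    # same key, so a retried insert collides instead of creating a second draft.
    raw = f"{run_id}|{to_address}|{subject}"
    return hashlib.sha256(raw.encode()).hexdigest()


def create_draft(db: sqlite3.Connection, run_id: str, to: str, subject: str, body: str) -> Optional[str]:
    """LLM-facing tool: writes a pending row. Returns None if this intent already exists."""
    draft_id = str(uuid.uuid4())
    try:
        with db:
            db.execute(
                "INSERT INTO draft_emails (id, to_address, subject, body, idempotency_key) "
                "VALUES (?, ?, ?, ?, ?)",
                (draft_id, to, subject, body, intent_key(run_id, to, subject)),
            )
    except sqlite3.IntegrityError:
        return None  # duplicate idempotency_key: this call was a retry
    return draft_id


def approve_draft(db: sqlite3.Connection, draft_id: str, approver: str) -> None:
    """Human approval step (e.g. behind a review UI); never exposed to the LLM."""
    with db:
        cur = db.execute(
            "UPDATE draft_emails SET status = 'approved', approved_by = ? "
            "WHERE id = ? AND status = 'pending'",
            (approver, draft_id),
        )
    if cur.rowcount != 1:
        raise ValueError(f"draft {draft_id} is not pending")


def send_draft(db: sqlite3.Connection, draft_id: str) -> None:
    """Separate non-LLM send path: only approved drafts go out, and only once."""
    with db:
        cur = db.execute(
            "UPDATE draft_emails SET status = 'sent' WHERE id = ? AND status = 'approved'",
            (draft_id,),
        )
        if cur.rowcount != 1:
            raise PermissionError(f"draft {draft_id} is not approved (or was already sent)")
        # The real provider call, made with send-scoped credentials, would go here;
        # an exception raised inside this block rolls back the status change.
```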

Dry-run as a first-class mode

When you do hand the agent the ability to execute, do it through a dry-run flag the executor honors:

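```python
def execute_tool(tool: str, args: dict, dry_run: bool = True) -> dict:
    # TOOL_REGISTRY maps allowlisted tool names to specs exposing validate(),
    # execute(), and a side_effect description; it is defined elsewhere.
    spec = TOOL_REGISTRY[tool]  # raises KeyError if not allowlisted
    spec.validate(args)
    if dry_run:
        # Report what would happen without touching external state.
        return {"would_call": tool, "with": args, "side_effect": spec.side_effect}
    return spec.execute(args)
```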

Dry-run is what lets you watch the agent operate in production without authorizing any of its actions. You read the dry-run log for a week, gain confidence, then flip the flag for the safe subset of tools and keep the dry-run for the dangerous ones forever.
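One way to express "flip the flag for the safe subset" is to replace the single boolean with a set of tools promoted to live execution, so dry-run remains the default for anything not explicitly promoted. A self-contained sketch; ToolSpec, LIVE_TOOLS, execute_with_rollout, and the two example tools are hypothetical, and validation is reduced to a required-arguments check.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass(frozen=True)
class ToolSpec:
    name: str
    required_args: frozenset[str]
    side_effect: str  # human-readable, shown in the dry-run log
    run: Callable[[dict], Any]

    def validate(self, args: dict) -> None:
        missing = self.required_args - set(args)
        if missing:
            raise ValueError(f"{self.name}: missing args {sorted(missing)}")


# Hypothetical registry: being in this dict is what "allowlisted" means.
TOOL_REGISTRY = {
    "read_calendar": ToolSpec("read_calendar", frozenset({"day"}), "none", lambda a: ["standup 09:00"]),
    "send_email": ToolSpec("send_email", frozenset({"to", "body"}), "sends an email", lambda a: "sent"),
}

# Tools promoted to live execution after the dry-run log was reviewed.
# Dangerous tools are simply never added here.
LIVE_TOOLS = frozenset({"read_calendar"})


def execute_with_rollout(tool: str, args: dict) -> dict:
    spec = TOOL_REGISTRY[tool]  # KeyError if the tool is not allowlisted
    spec.validate(args)
    if tool not in LIVE_TOOLS:
        # Dry-run: describe the call, touch nothing.
        return {"would_call": tool, "with": args, "side_effect": spec.side_effect}
    return {"called": tool, "result": spec.run(args)}
```

With this shape, promoting a tool is a reviewed one-line change to LIVE_TOOLS rather than a runtime flag, which fits keeping dry-run on the dangerous tools indefinitely.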

Why this is the second load-bearing layer

The cost of a bug here scales with the blast radius of the worst tool you've registered. For an agent that only reads, a bug is annoying. For an agent that can write or send, a bug is an apology email to a customer and possibly an incident review. The asymmetry is what makes this non-negotiable the moment you cross from generation to action.

The other three — when the trigger fires

The remaining layers are real, and you do eventually need them. They are not theater forever — they are theater until a specific trigger fires, and then they are essential.

Input filter (prompt injection, jailbreaks)

Add this layer when:

- Your prompt incorporates untrusted external content — emails, web pages, documents uploaded by users — that a third party can craft.

- You have a multi-tenant product where one user's input reaches another tenant's prompt context.

- You expose a public chatbot whose system prompt or tools are valuable enough to be worth extracting.

Until one of those is true, prompt-injection defense is a research project. A B2B feature that summarizes a user's own data for that same user does not have an injection problem in any meaningful sense.

Output PII filter

Add this layer when:

- You handle regulated data — health, financial, legal — under HIPAA, GDPR, or sector-specific rules, and the LLM might surface fields that should be redacted.

- You log model outputs and the logs are accessible by people who shouldn't see customer PII.

- You ship outputs to third-party tools (analytics, training data pipelines) that broaden the audience.

If your output goes back to the same user who provided the input, redacting their own PII is at best confusing and at worst breaks the feature.
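When the logging trigger fires, a first pass can be as small as a logging filter that redacts obvious identifiers before model output reaches any log sink. This is a deliberately crude sketch: the two regexes are assumptions, and a filter for genuinely regulated data would need far broader coverage than pattern matching on emails and long digit runs.

```python
import logging
import re

# Illustrative patterns only: email addresses, and runs of 9+ digits that may be
# separated by spaces or dashes (phone, account, card numbers). They will miss
# plenty of PII and occasionally redact harmless numbers.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
LONG_NUMBER_RE = re.compile(r"\b\d(?:[ -]?\d){8,}\b")


def redact(text: str) -> str:
    text = EMAIL_RE.sub("[EMAIL]", text)
    return LONG_NUMBER_RE.sub("[NUMBER]", text)


class RedactingFilter(logging.Filter):
    """Rewrites each record's message with identifiers redacted."""

    def filter(self, record: logging.LogRecord) -> bool:
        record.msg = redact(record.getMessage())  # merge args first, then redact
        record.args = ()
        return True
```

Attaching it to the handler with handler.addFilter(RedactingFilter()), rather than to a single logger, means records propagated up from child loggers are redacted too.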

Hallucination boundary

Add this layer when:

- A single hallucinated fact breaks the product, as with medical advice, legal references, financial recommendations, or citations in research.

- You operate a RAG system where the contract with the user is "answers come from these documents." Retrieval grounding plus a refuse-on-low-confidence policy is the minimum.

- You publish machine-generated content that gets indexed and read as authoritative.

For most assistive features (drafts, summaries, suggestions a human reviews), a small hallucination rate is annoying, not catastrophic. Adding a hallucination boundary before one of these triggers applies buys you complexity, slower responses, and a meaningfully worse product.
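For the RAG case, here is a minimal sketch of the "retrieval grounding plus refuse-on-low-confidence" minimum. It assumes the retriever returns passages with a similarity score in [0, 1] and that the prompt asks the model to cite passage ids like [p1]; MIN_SCORE, Passage, grounded_answer, and the citation format are hypothetical. Both refusals happen in code rather than being left to the prompt.

```python
import re
from dataclasses import dataclass
from typing import Callable

REFUSAL = "I couldn't find that in the provided documents."
MIN_SCORE = 0.75  # hypothetical threshold; tune it against your own retrieval evals
CITATION_RE = re.compile(r"\[(p\d+)\]")  # assumes citations formatted like [p1]


@dataclass
class Passage:
    pid: str
    text: str
    score: float  # retriever similarity, assumed to be in [0, 1]


def grounded_answer(
    question: str,
    passages: list[Passage],
    generate: Callable[[str, list[Passage]], str],
) -> str:
    # 1. Refuse before paying for a model call if retrieval found nothing solid.
    strong = [p for p in passages if p.score >= MIN_SCORE]
    if not strong:
        return REFUSAL
    answer = generate(question, strong)
    # 2. Refuse if the answer cites nothing, or cites a passage it was not given.
    cited = set(CITATION_RE.findall(answer))
    if not cited or not cited <= {p.pid for p in strong}:
        return REFUSAL
    return answer
```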

The shipping triage

If you're starting an LLM feature today and you want to know what to build first, the order is:

- Output schema validation, on day one. Pydantic, JSON mode or tool-call schema, repair, retry, typed errors.

- Tool and action policy, the moment the agent does anything other than generate text. Allowlist, scoped credentials, draft-then-approve for side-effectful tools, dry-run mode, idempotency keys.

- Everything else, when its trigger fires. Untrusted input → injection defense. Regulated data → PII filter. Factual contract → hallucination boundary.

The five-layer slide is correct as a long-run map. It is wrong as a starting checklist. Two layers carry the system; the others carry specific risks. Treating them all as equally urgent is how teams spend a quarter on guardrails and ship a feature whose actual problem was that the JSON parser was crashing on Tuesdays.